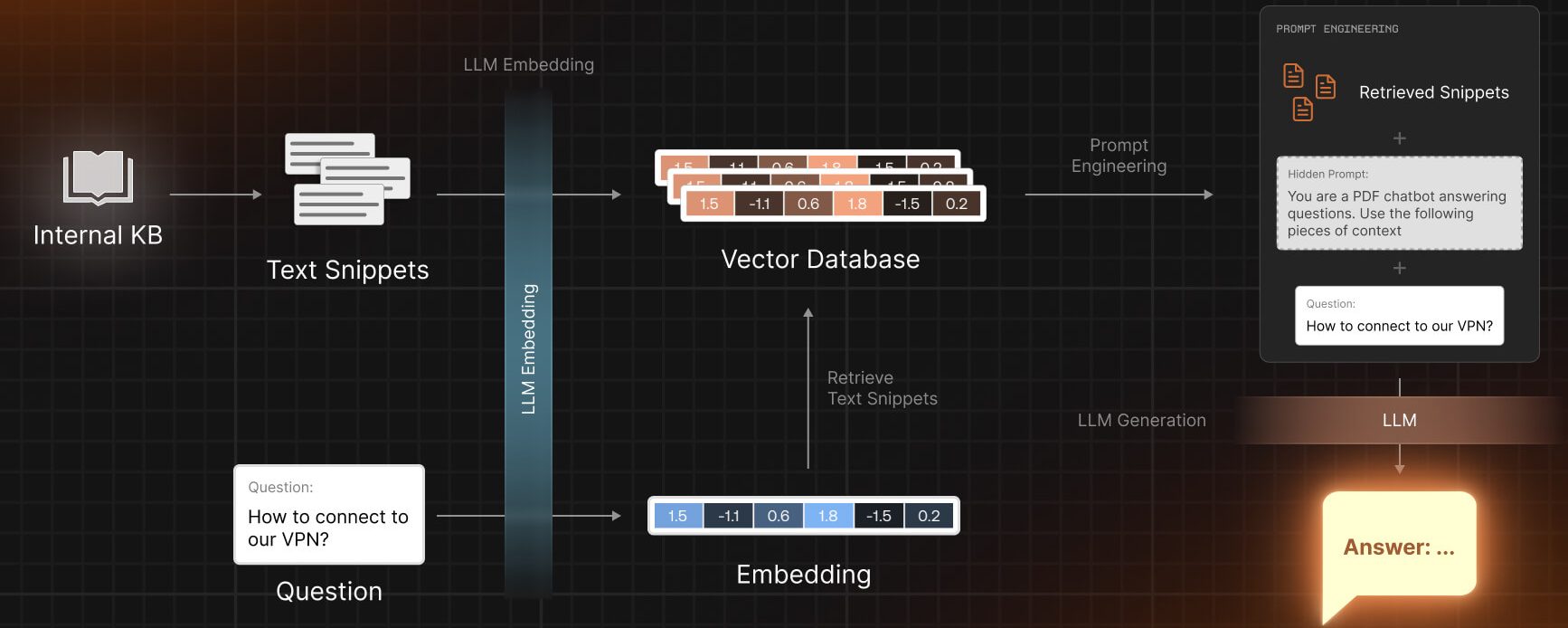

Embeddings are a fundamental concept in the field of natural language processing (NLP) and machine learning. They are mathematical representations of words or phrases that capture their semantic meaning. In other words, embeddings are a way to convert text into numerical vectors that can be understood and processed by machine learning algorithms.

In this article, we will explore the concept of embeddings in more detail. We will discuss how embeddings are created, the different types of embeddings that exist, and their applications in NLP tasks such as sentiment analysis, language translation, and document classification. Additionally, we will delve into popular embedding models like Word2Vec and GloVe, and examine how they have revolutionized the field of NLP. By the end of this article, you will have a solid understanding of what embeddings are and how they can be used to enhance the performance of NLP models.

Embeddings are a way to represent words or sentences as numerical vectors.

Embeddings represent words or sentences as numerical vectors that capture semantic meaning. Because meaning is encoded as numbers, a model can compare words and sentences mathematically instead of treating them as unrelated symbols.

Traditionally, words have been represented as one-hot vectors: each word gets a vector of zeros with a single one at the index of the word’s position in the vocabulary. One-hot vectors cannot capture relationships between words, because every pair of distinct words is equally far apart, and the vector length grows with the size of the vocabulary.
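To make the limitation concrete, here is a minimal sketch of one-hot encoding. The three-word vocabulary and the helper names `one_hot` and `dot` are assumptions chosen for illustration, not part of any library.

```python
# Toy vocabulary; the words and their order are illustrative assumptions.
vocab = ["cat", "dog", "car"]

def one_hot(word, vocab):
    """Return a vector of zeros with a single 1 at the word's vocabulary index."""
    vec = [0] * len(vocab)
    vec[vocab.index(word)] = 1
    return vec

def dot(u, v):
    """Dot product, used here as a simple similarity score."""
    return sum(a * b for a, b in zip(u, v))

cat, dog, car = (one_hot(w, vocab) for w in vocab)

print(cat)            # [1, 0, 0]
print(dot(cat, dog))  # 0
print(dot(cat, car))  # 0
```

The score for "cat" and "dog" is the same as for "cat" and "car", so nothing in the representation says that two of these words are animals.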

Embeddings, on the other hand, encode the meaning of words by mapping them to dense vectors in a continuous vector space. This mapping is learned from large amounts of text data using techniques such as Word2Vec, GloVe, or FastText. These algorithms analyze the context in which words appear and create embeddings that capture the semantic relationships between words.

Once the embeddings are learned, they can be used in various NLP tasks such as text classification, sentiment analysis, machine translation, and more. By representing words as numerical vectors, embeddings enable machines to perform calculations and comparisons on words, making it easier to process and understand natural language.

Embeddings are not limited to just words. They can also be applied to represent sentences, documents, or any other text unit. Sentence embeddings, for example, can be used to measure the similarity between two sentences or to find sentences with similar meanings.

Embeddings have significantly improved the performance of many language-related tasks. They are a core building block of systems that process and generate natural language, including chatbots, translation systems, and voice assistants.

In conclusion, embeddings are a powerful technique in NLP that represent words or sentences as numerical vectors. They capture the semantic meaning of the text and enable machines to process and understand human language more effectively. With the advancements in embedding algorithms, we can expect further improvements in language-related applications and the development of more intelligent systems.

They capture the meaning and context of words in a high-dimensional space.

Embeddings are a fundamental concept in the field of natural language processing (NLP) and are widely used in various applications such as machine translation, sentiment analysis, and text classification. But what exactly are embeddings?

Embeddings can be thought of as a way to represent words or phrases in a numerical form that captures their meaning and context. Instead of treating words as discrete symbols, embeddings map them to a high-dimensional space where their relationships and similarities can be measured mathematically.

Imagine a world where words are represented as points in a multi-dimensional space. Words that have similar meanings or are used in similar contexts are closer to each other in this space. For example, the words “cat” and “dog” would be closer to each other than the words “cat” and “car”. This notion of proximity allows us to capture the semantic relationships between words.
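Proximity in the embedding space is commonly measured with cosine similarity. The sketch below uses hand-picked three-dimensional vectors purely for illustration; real embeddings are learned from data and usually have hundreds of dimensions.

```python
import math

# Hand-picked toy vectors (an assumption for illustration, not learned values).
embeddings = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}

def cosine_similarity(u, v):
    """Cosine of the angle between u and v: 1.0 means same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

cat_dog = cosine_similarity(embeddings["cat"], embeddings["dog"])
cat_car = cosine_similarity(embeddings["cat"], embeddings["car"])

print(cat_dog > cat_car)  # True
```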

One popular method for generating word embeddings is Word2Vec, which is based on the idea that words that appear in similar contexts often have similar meanings. Word2Vec trains a shallow neural network on a prediction task: either predicting the surrounding words from a target word (Skip-gram) or predicting a target word from its surrounding words (CBOW).

Another method is GloVe (Global Vectors for Word Representation), which combines global co-occurrence statistics with the local context windows used by methods like Word2Vec. GloVe builds a word co-occurrence matrix from a large corpus and fits word vectors whose dot products approximate the logarithms of the co-occurrence counts, which is a form of matrix factorization.

Once we have these word embeddings, we can use them as features in various NLP tasks. For example, in sentiment analysis, we can train a machine learning model using word embeddings to classify text as positive or negative based on the sentiment conveyed by the words.
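One simple way to turn word embeddings into features is to average the vectors of the words in a text. The sketch below pairs that with a nearest-centroid classifier. The two-dimensional embeddings, the tiny training set, and the function names are all assumptions for illustration; a real system would use learned embeddings and a properly trained model.

```python
# Hypothetical 2-d embeddings; real ones are learned from a large corpus.
embeddings = {
    "great": [0.9, 0.1], "love": [0.8, 0.2], "good": [0.7, 0.3],
    "awful": [0.1, 0.9], "hate": [0.2, 0.8], "bad": [0.3, 0.7],
    "movie": [0.5, 0.5],
}

def mean(vectors):
    """Element-wise average of equal-length vectors."""
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(len(vectors[0]))]

def text_features(text):
    """Average the embeddings of the known words in a text."""
    vectors = [embeddings[w] for w in text.lower().split() if w in embeddings]
    if not vectors:
        raise ValueError("no known words in text")
    return mean(vectors)

def squared_distance(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))

# Tiny labeled training set (illustrative).
train = {"positive": ["great movie", "love"], "negative": ["awful movie", "hate"]}
centroids = {label: mean([text_features(t) for t in texts])
             for label, texts in train.items()}

def classify(text):
    """Return the label whose centroid is closest to the text's features."""
    feats = text_features(text)
    return min(centroids, key=lambda label: squared_distance(feats, centroids[label]))

print(classify("good movie"))  # positive
print(classify("bad movie"))   # negative
```

Neither "good" nor "bad" appears in the training texts; they are classified correctly only because their vectors sit near those of "great" and "awful".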

Embeddings have revolutionized the field of NLP by enabling computers to understand and process human language more effectively. They have also paved the way for advancements in machine translation, information retrieval, and even chatbots.

In conclusion, embeddings are a powerful tool in NLP that allow us to capture the meaning and context of words in a high-dimensional space. They have proven to be invaluable in various applications and have greatly improved the performance of NLP models. With the advancements in deep learning and neural networks, we can expect even more sophisticated embeddings that capture the nuances of language with even greater accuracy.

They are often used in natural language processing tasks such as sentiment analysis or machine translation.

Embeddings are a fundamental concept in the field of artificial intelligence and natural language processing. They are a way to represent words, phrases, or even entire documents as numerical vectors in a high-dimensional space. These numerical representations capture the semantic meaning of the text and enable machine learning models to understand and process language.

Embeddings are often used in various natural language processing tasks, such as sentiment analysis, machine translation, text classification, and information retrieval. They provide a way to transform raw text into a format that can be easily understood and processed by machine learning algorithms.

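One of the key advantages of using embeddings is that they can capture the semantic relationships between words. For example, words that have similar meanings or are often used in similar contexts will have similar vector representations in the embedding space. This lets a model generalize beyond its labeled examples: a word that never appeared in the task’s training data can still be handled sensibly if its embedding lies close to those of words that did. Word2Vec and GloVe cannot, however, produce a vector for a word that is missing from the embedding vocabulary itself.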

There are different techniques and algorithms to generate word embeddings. One popular approach is Word2Vec, which uses a neural network to learn word embeddings from large amounts of text data. Another widely used method is GloVe, which combines global matrix factorization with local context windows to create word embeddings.

Embeddings can also be pre-trained on large corpora of text data and then fine-tuned on specific tasks. This allows models to leverage the knowledge encoded in the pre-trained embeddings and adapt them to the specific requirements of the task at hand.

Overall, embeddings are a powerful tool in natural language processing and enable machines to understand and process text data. They play a crucial role in tasks such as sentiment analysis, machine translation, and text classification. By representing words and phrases as numerical vectors, embeddings allow machine learning models to capture the semantic meaning of the text and make accurate predictions or classifications.

Word embeddings are learned from large amounts of text data using techniques like Word2Vec or GloVe.

Word embeddings are a crucial concept in natural language processing (NLP) and machine learning. They are numerical representations of words that capture their semantic meaning and relationships. In simple terms, word embeddings act like coordinates on a map of meaning: words with related meanings are placed near each other, which lets computers compare and process language in a more meaningful way.

There are different techniques used to learn word embeddings, such as Word2Vec and GloVe. These techniques analyze large amounts of text data to map words to dense vectors in a high-dimensional space. The resulting vectors capture semantic relationships between words, making it possible to perform mathematical operations on them.
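A well-known example of such an operation is the word analogy, where subtracting and adding vectors yields a point near a related word. The sketch below uses hand-constructed two-dimensional vectors, so the result is exact by design; with learned embeddings the relationship holds only approximately. The vectors and the `analogy` helper are assumptions for illustration.

```python
import math

# Hand-constructed toy vectors: first axis ~ "royalty", second ~ "male".
vectors = {
    "king":  [0.9, 0.9],
    "queen": [0.9, 0.1],
    "man":   [0.1, 0.9],
    "woman": [0.1, 0.1],
    "apple": [0.4, 0.6],
}

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def analogy(a, b, c):
    """Answer 'a is to b as c is to ?' via the vector b - a + c."""
    target = [vb - va + vc for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine_similarity(vectors[w], target))

print(analogy("man", "king", "woman"))  # queen
```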

Word2Vec

Word2Vec is a popular algorithm used to learn word embeddings. It is based on the idea that words appearing in similar contexts have similar meanings. Word2Vec comes in two flavors: Continuous Bag-of-Words (CBOW) and Skip-gram.

In the CBOW model, the algorithm predicts a target word from the context words that fall within a fixed-size window around it. For example, given the sentence “The cat is playing with a ball” and a window of two words on each side, the CBOW model would try to predict “cat” from the surrounding words “The,” “is,” and “playing.”

In contrast, the Skip-gram model predicts the context words given a target word. Using the same sentence and window, the Skip-gram model would try to predict “The,” “is,” and “playing” from the target word “cat.”
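The difference between the two models is easiest to see in the training examples they consume. The sketch below only generates those examples and does not train a network. The window size of two words on each side and the function names are assumptions for illustration.

```python
def context_window(tokens, index, window):
    """Words within `window` positions of tokens[index], excluding the word itself."""
    left = tokens[max(0, index - window):index]
    right = tokens[index + 1:index + 1 + window]
    return left + right

def cbow_examples(tokens, window):
    """(context words, target) pairs: CBOW predicts the target from its context."""
    return [(context_window(tokens, i, window), target)
            for i, target in enumerate(tokens)]

def skipgram_examples(tokens, window):
    """(target, context word) pairs: Skip-gram predicts each context word from the target."""
    return [(target, ctx)
            for i, target in enumerate(tokens)
            for ctx in context_window(tokens, i, window)]

tokens = "the cat is playing with a ball".split()

print(cbow_examples(tokens, window=2)[1])
# (['the', 'is', 'playing'], 'cat')

print([pair for pair in skipgram_examples(tokens, window=2) if pair[0] == "cat"])
# [('cat', 'the'), ('cat', 'is'), ('cat', 'playing')]
```

A larger window would add more distant words such as "with" and "a" to the context of "cat".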

GloVe

GloVe, short for Global Vectors, is another popular algorithm for learning word embeddings. It combines global matrix factorization with local context window methods to capture both global and local word relationships. GloVe takes into account the co-occurrence statistics of words in a large corpus of text to learn word vectors.
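The co-occurrence statistics GloVe starts from can be sketched as a simple counting pass over the corpus. This covers only the counting step, with plain unweighted counts, and leaves out the fitting of the vectors. The two-sentence corpus, the window size, and the function name are assumptions for illustration.

```python
from collections import Counter

def cooccurrence_counts(sentences, window):
    """Count how often each ordered word pair appears within `window` positions."""
    counts = Counter()
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            start = max(0, i - window)
            stop = min(len(tokens), i + window + 1)
            for j in range(start, stop):
                if j != i:
                    counts[(word, tokens[j])] += 1
    return counts

corpus = ["the cat sat on the mat", "the dog sat on the rug"]
counts = cooccurrence_counts(corpus, window=2)

print(counts[("sat", "on")])   # 2
print(counts[("cat", "dog")])  # 0
```

Although "cat" and "dog" never co-occur here, they share neighbours such as "the" and "sat", and that overlap is the kind of signal that pulls their vectors together.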

Both Word2Vec and GloVe result in word embeddings that allow computers to understand the semantic relationships between words. These embeddings can be used in a variety of NLP tasks, such as sentiment analysis, machine translation, and text classification.

- Word embeddings capture semantic meaning and relationships between words.

- Word2Vec and GloVe are popular algorithms used to learn word embeddings.

- Word2Vec has two models: CBOW and Skip-gram.

- GloVe combines global matrix factorization with local context window methods.

- Word embeddings are used in various NLP tasks.

In conclusion, word embeddings are a powerful tool in NLP and machine learning. They allow computers to understand and process language in a more meaningful way by capturing the semantic relationships between words. Word2Vec and GloVe are two popular algorithms used to learn word embeddings, and they have been applied to various NLP tasks with great success.

Sentence embeddings are more complex and aim to capture the meaning of an entire sentence.

Sentence embeddings go beyond representing individual words: they map an entire sentence or passage to a single vector that aims to capture its overall meaning. This is especially useful in natural language processing tasks where the context and semantics of a sentence are important.

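One popular method for generating sentence embeddings is to use pre-trained models such as BERT (Bidirectional Encoder Representations from Transformers). BERT is a Transformer-based language model developed by Google and pre-trained on a large corpus of text. It produces a contextual vector for each token, taking the surrounding words into account, and these token vectors are then pooled (for example, by averaging) into a single sentence embedding. Models fine-tuned specifically for sentence similarity, such as Sentence-BERT, generally give better sentence embeddings than pooling raw BERT outputs.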

Another approach to generating sentence embeddings is by using recurrent neural networks (RNNs). RNNs are a type of artificial neural network that can process sequential data such as sentences. They can capture the dependencies between words in a sentence and generate meaningful sentence embeddings.
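As a rough sketch of the idea, a simple recurrent network reads one word vector at a time, updates a hidden state, and uses the final hidden state as the sentence embedding. The weights below are random and untrained, so the output carries no real meaning; the word vectors, sizes, and function names are assumptions for illustration only.

```python
import math
import random

def make_rnn(input_size, hidden_size, seed=0):
    """Create random (untrained) input and recurrent weight matrices."""
    rng = random.Random(seed)
    w_in = [[rng.uniform(-0.5, 0.5) for _ in range(input_size)]
            for _ in range(hidden_size)]
    w_rec = [[rng.uniform(-0.5, 0.5) for _ in range(hidden_size)]
             for _ in range(hidden_size)]
    return w_in, w_rec

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def rnn_sentence_embedding(word_vecs, w_in, w_rec):
    """Run h_t = tanh(W_in x_t + W_rec h_{t-1}) and return the final hidden state."""
    hidden = [0.0] * len(w_rec)
    for x in word_vecs:
        pre = [a + b for a, b in zip(matvec(w_in, x), matvec(w_rec, hidden))]
        hidden = [math.tanh(p) for p in pre]
    return hidden

# Toy 2-d word vectors (illustrative, not learned).
word_vectors = {"dog": [0.9, 0.1], "bites": [0.5, 0.5], "man": [0.1, 0.9]}
w_in, w_rec = make_rnn(input_size=2, hidden_size=3)

def embed(sentence):
    return rnn_sentence_embedding([word_vectors[w] for w in sentence.split()], w_in, w_rec)

forward = embed("dog bites man")
reverse = embed("man bites dog")

print(len(forward))  # 3
print(forward)
print(reverse)
```

Because the hidden state depends on the order in which words arrive, the two sentences get different vectors even though they contain the same words, which plain averaging of word vectors cannot achieve.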

Sentence embeddings can be used in a variety of applications. One common use case is in text classification, where the goal is to classify a given text into predefined categories. By using sentence embeddings, the model can understand the overall meaning of the text and make more accurate predictions.

Another application of sentence embeddings is in information retrieval, where the goal is to retrieve relevant documents or sentences given a query. By comparing the embeddings of the query and the documents, the model can rank the documents based on their similarity to the query.
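The ranking step can be sketched as follows, assuming the query and documents have already been embedded by some model. The three-dimensional vectors, document titles, and the `rank_documents` helper are hypothetical stand-ins for illustration.

```python
import math

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def rank_documents(query_vec, doc_vecs):
    """Return document ids sorted from most to least similar to the query."""
    return sorted(doc_vecs,
                  key=lambda doc_id: cosine_similarity(query_vec, doc_vecs[doc_id]),
                  reverse=True)

# Hypothetical precomputed embeddings (not produced by a real model).
doc_vecs = {
    "pet care guide":    [0.7, 0.3, 0.4],
    "car repair manual": [0.1, 0.9, 0.3],
    "dog training tips": [0.8, 0.2, 0.1],
}
query_vec = [0.85, 0.15, 0.1]  # stands in for a query such as "how to train a puppy"

print(rank_documents(query_vec, doc_vecs))
# ['dog training tips', 'pet care guide', 'car repair manual']
```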

In conclusion, sentence embeddings are a powerful tool in natural language processing that can capture the meaning of an entire sentence. They can be generated using pre-trained models like BERT or by using recurrent neural networks. Sentence embeddings have various applications, including text classification and information retrieval.

Frequently Asked Questions

What are Embeddings?

Embeddings are a way to represent words or objects as numerical vectors in a high-dimensional space.

How are Embeddings generated?

Embeddings are generated using techniques like Word2Vec or GloVe, which learn vector representations from the contexts in which words appear in a large text corpus.

What are the applications of Embeddings?

Embeddings are widely used in natural language processing tasks like sentiment analysis, machine translation, and document classification.

Can Embeddings be used for non-text data?

Yes. Models trained on images, audio, or other data can map each input to a numerical vector in the same way, so that similar inputs end up close together in the embedding space.