Exploring the Intersection of Linguistics and Machine Learning

Word2vec is one of the most influential techniques in natural language processing (NLP). Introduced in 2013 by a team of researchers led by Tomas Mikolov at Google, it changed how machines represent human language. Word2vec converts words into numerical vectors, which lets computers compare and process text with ordinary vector arithmetic.

Introduction to Word2vec

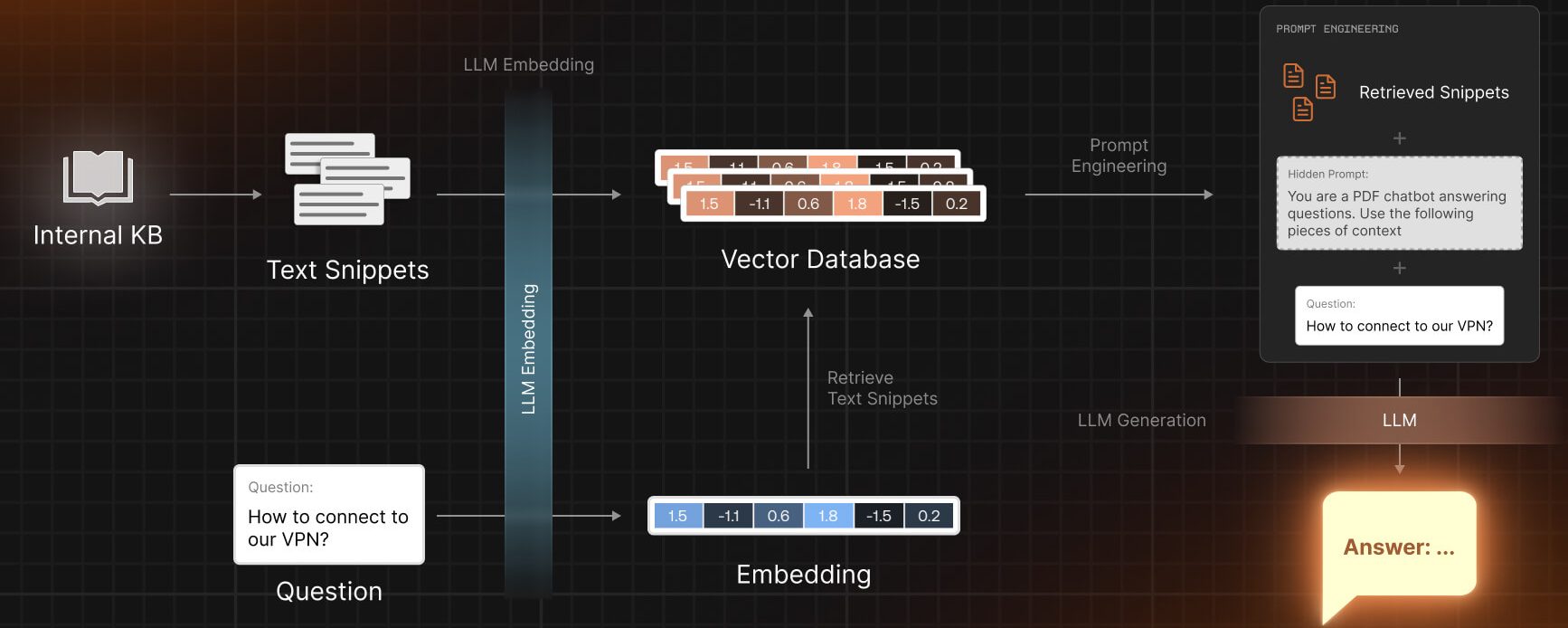

Word2vec is a group of related models used to produce word embeddings, which are vector representations of words. These models are shallow, two-layer neural networks trained to reconstruct the linguistic contexts of words. Each word is mapped to a point in a multi-dimensional space, and words that appear in similar contexts end up close together, so the geometry of the space reflects word meaning and relationships in a form that machines can compute with.
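To make the "two-layer" description concrete, here is a minimal sketch of a forward pass, assuming a full softmax over a tiny vocabulary. The names `vocab`, `w_in`, `w_out`, and `forward` are illustrative, the weights are random rather than trained, and practical implementations typically replace the full softmax with cheaper approximations such as negative sampling or hierarchical softmax.

```python
import math
import random


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def forward(word_index, w_in, w_out):
    """Return a probability for every vocabulary word given one input word."""
    # Layer 1: look up the input word's embedding (one row of w_in).
    hidden = w_in[word_index]
    # Layer 2: score every vocabulary word against that embedding.
    scores = [sum(h * w for h, w in zip(hidden, row)) for row in w_out]
    return softmax(scores)


# Toy setup (assumption): 5-word vocabulary, 3-dimensional embeddings.
vocab = ["the", "cat", "sat", "on", "mat"]
dim = 3
rng = random.Random(0)
w_in = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in vocab]
w_out = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in vocab]

probs = forward(vocab.index("cat"), w_in, w_out)
for word, p in zip(vocab, probs):
    print(f"{word}: {p:.3f}")
```

After training, the rows of `w_in` are what is usually kept as the word embeddings.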

How Word2vec Works

Word2vec uses two primary models: Continuous Bag-of-Words (CBOW) and Skip-Gram.

Continuous Bag-of-Words (CBOW)

- Functionality: Predicts a target word based on context words.

- Approach: Takes context words as input and tries to predict the word that is most likely to appear in that context.

- Usage: Trains faster and needs less computation than Skip-Gram, and tends to represent frequent words slightly better.

Skip-Gram

- Functionality: Predicts context words from a target word.

- Approach: Takes a word as input and predicts the surrounding context words.

- Usage: Slower to train than CBOW, but works well even with smaller datasets and tends to represent rare words better.

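Both models work by sliding a fixed-size window over the text. At each position, the word at the center of the window is the target and the words around it are its context; the network is then trained on these target and context combinations.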
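The sliding window can be sketched as follows. This is an illustrative example, not code from any particular Word2vec implementation: the function names and the whitespace tokenization are assumptions, and real implementations add steps such as subsampling frequent words.

```python
def context_windows(tokens, window=2):
    """Yield (target, context_words) for each position in the token list."""
    for i, target in enumerate(tokens):
        left = tokens[max(0, i - window):i]
        right = tokens[i + 1:i + 1 + window]
        yield target, left + right


def skipgram_pairs(tokens, window=2):
    """Skip-Gram: one (input target, output context word) pair per context word."""
    return [
        (target, ctx)
        for target, context in context_windows(tokens, window)
        for ctx in context
    ]


def cbow_examples(tokens, window=2):
    """CBOW: one (input context words, output target) example per position."""
    return [(context, target) for target, context in context_windows(tokens, window)]


tokens = "the cat sat on the mat".split()

print(skipgram_pairs(tokens, window=1)[:3])
print(cbow_examples(tokens, window=1)[1])
```

The same windows feed both models; only the direction of prediction differs.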

Importance of Word Embeddings

Word embeddings, the output of Word2vec, provide numerous benefits:

- Semantic Information: Words used in similar contexts receive similar vectors, so synonyms and related terms end up close together. Antonyms often share contexts too, so they can also end up close together.

- Dimensionality Reduction: Replaces sparse, vocabulary-sized representations such as one-hot vectors with dense vectors of typically a few hundred dimensions, which are far more manageable for machine learning models.

- Contextual Relationships: Encodes the contexts in which a word typically appears across the training corpus, which is useful for many NLP tasks.
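Similarity between embeddings is commonly measured with cosine similarity. The sketch below uses hand-made three-dimensional vectors purely for illustration; they are not the output of a trained model, and the helper names are assumptions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Invented toy vectors; a trained model would have hundreds of dimensions.
embeddings = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}


def most_similar(word, embeddings):
    """Return the other word whose vector is closest to the given word's."""
    return max(
        (w for w in embeddings if w != word),
        key=lambda w: cosine_similarity(embeddings[word], embeddings[w]),
    )


print(most_similar("king", embeddings))
print(round(cosine_similarity(embeddings["king"], embeddings["apple"]), 3))
```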

Applications of Word2vec

Word2vec has a wide range of applications in the field of NLP:

- Sentiment Analysis: Helps in determining the sentiment of text data.

- Machine Translation: Assists in translating text from one language to another.

- Information Retrieval: Enhances search algorithms by understanding the semantic meaning of words.

- Text Classification: Improves the performance of classifiers by providing them with rich word representations.
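One simple way to feed word embeddings into a classifier or a search index is to average the vectors of the words in a text. This is a sketch of that one approach, not the only option; the two-dimensional vectors and the name `average_vector` are invented for illustration.

```python
def average_vector(tokens, embeddings):
    """Average the vectors of all known tokens; return None if none are known."""
    vectors = [embeddings[t] for t in tokens if t in embeddings]
    if not vectors:
        return None
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


# Invented toy vectors, not from a trained model.
embeddings = {
    "good": [1.0, 0.0],
    "movie": [0.0, 1.0],
    "bad": [-1.0, 0.0],
}

features = average_vector("good movie".split(), embeddings)
print(features)
```

The resulting fixed-length vector can be passed to any standard classifier. Averaging discards word order, which is a known trade-off of this approach.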

Challenges and Limitations

Despite its effectiveness, Word2vec is not without limitations:

- Out-of-Vocabulary Words: Has no vector for any word that did not appear in the training data.

- Fixed Context Window: Only words inside the window contribute to a training example, so dependencies that span longer distances are not captured.

- Homonyms and Polysemy: Assigns exactly one vector to each word form, so the different senses of a word such as "bank" are blended into a single representation.
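Two of these limitations follow directly from the fact that a trained model is, in effect, a static lookup table. The sketch below assumes a hypothetical table with invented two-dimensional vectors; `lookup` and `embed_sentence` are illustrative helpers, not part of any library.

```python
# Hypothetical static embedding table; the vectors are invented.
embeddings = {
    "bank": [0.4, 0.6],
    "river": [0.1, 0.9],
    "money": [0.8, 0.2],
}


def lookup(word, embeddings):
    """Return the stored vector, or None if the word was never seen in training."""
    return embeddings.get(word)


def embed_sentence(sentence, embeddings):
    """Embed each whitespace-separated token independently of its neighbors."""
    return [lookup(token, embeddings) for token in sentence.lower().split()]


# Out-of-vocabulary: there is simply no entry to return.
print(lookup("blockchain", embeddings))

# Polysemy: "bank" gets the same vector regardless of the surrounding words.
river_sentence = embed_sentence("river bank", embeddings)
money_sentence = embed_sentence("money bank", embeddings)
print(river_sentence[1] == money_sentence[1])
```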

Beyond Word2vec: Advances and Alternatives

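The success of Word2vec has inspired the development of other models such as GloVe (Global Vectors for Word Representation) and BERT (Bidirectional Encoder Representations from Transformers). GloVe learns word vectors from global word co-occurrence statistics rather than from local windows alone. BERT produces contextual embeddings, in which the vector for a word depends on the sentence around it, which addresses the polysemy problem of static embeddings.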

Conclusion

Word2vec has been a cornerstone of NLP, giving machines a practical way to represent the meaning of words as vectors. While it has its limitations, its foundational principles continue to influence newer, more advanced techniques for text processing. The continued evolution of embedding models promises further progress toward more sophisticated machine understanding of language.