Most teams building with generative AI eventually hit the same wall: the demo looked impressive, but production quality is unstable. One week users praise outputs, and the next week support tickets spike. The core issue is not just prompting or model choice. It is the lack of an evaluation framework that translates business expectations into measurable quality signals.

If your organization is still aligning on the basics, start with this AI fundamentals guide. Then move from ad-hoc testing to a framework where every release is compared against explicit criteria.

Why evaluation frameworks fail in practice

Many teams collect metrics, but few connect them to real decisions. A useful framework must answer three operational questions: Is quality improving on the tasks users actually care about? Are we reducing risk? Is performance sustainable in cost and latency at target volume? Without this alignment, scorecards become vanity dashboards, and teams optimize synthetic benchmarks while customer-facing workflows still degrade.

The four evaluation layers

A robust framework combines four layers, each with different ownership:

- Task quality layer: factuality, completeness, instruction adherence, and clarity.

- Safety and policy layer: prohibited content checks, privacy leakage, and compliance risk.

- Runtime layer: latency percentiles, retries, tool-call failure rates, and timeout patterns.

- Business layer: conversion, support deflection, time-to-resolution, and unit cost per successful task.

Prompt iteration only works reliably when this structure is stable. The process described in Prompt Engineering in Practice becomes much more effective when prompts are evaluated against all four layers, not only output style.

Choosing evaluation datasets

Your framework is only as good as your test set. A practical split looks like this: a golden set with human-validated examples, an adversarial set with edge cases, a freshness set from recent user intents, and a regression set with historical incidents that must never repeat. Keep datasets versioned and tie every run to a dataset hash. This avoids debates about whether quality changes came from model updates or from dataset drift.
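One way to tie a run to a dataset hash is to fingerprint the examples themselves. The snippet below is a minimal sketch, assuming each example is a JSON-serializable dictionary; the `dataset_hash` helper, the field names, and the sample records are hypothetical and not taken from any specific tool.

```python
import hashlib
import json


def dataset_hash(examples: list[dict]) -> str:
    """Return a SHA-256 digest that is stable across key order and row order."""
    # Canonical serialization: sorted keys and fixed separators, so the same
    # example always produces the same string.
    lines = sorted(
        json.dumps(ex, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
        for ex in examples
    )
    digest = hashlib.sha256()
    for line in lines:
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")  # delimiter so adjacent rows cannot merge
    return digest.hexdigest()


# Hypothetical golden-set examples.
golden_v1 = [
    {"id": "g-001", "input": "Reset my password", "expected": "reset_flow"},
    {"id": "g-002", "input": "Cancel my order", "expected": "cancel_flow"},
]
golden_reordered = list(reversed(golden_v1))
golden_v2 = golden_v1 + [
    {"id": "g-003", "input": "Where is my refund?", "expected": "refund_status"}
]

# Attach the hash to the run so later comparisons know which data was used.
run_record = {"dataset": "golden", "dataset_hash": dataset_hash(golden_v1)}
```

With this approach, reordering rows leaves the hash unchanged, while adding or editing an example produces a new one. If row order matters for your evaluation, drop the `sorted` call.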

Teams that run retrieval-heavy systems should also link dataset slices to context bundle versions. The context discipline outlined in this MCP practical guide makes this tracking straightforward and auditable.

Metrics that should be mandatory

- Answer correctness score (human + automated rubric blend).

- Groundedness score (claim-to-source alignment).

- Refusal quality score (safe refusals that remain helpful).

- Latency SLO compliance (P95/P99 by workflow tier).

- Cost per accepted output (tokens + tool usage + retries).

These metrics should be segmented by intent class, model version, and prompt version. Aggregate values hide long-tail failures, and long-tail failures are where trust breaks first.
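To see how an aggregate can hide a weak segment, consider the sketch below. It assumes per-example results are stored as dictionaries with `intent`, `model`, `prompt`, and `correct` fields; these names and the sample data are illustrative only.

```python
from collections import defaultdict


def pass_rate_by_segment(rows, keys=("intent", "model", "prompt")):
    """Group results by the given keys and return the pass rate per segment."""
    totals = defaultdict(lambda: [0, 0])  # segment -> [passed, total]
    for row in rows:
        segment = tuple(row[k] for k in keys)
        totals[segment][0] += int(row["correct"])
        totals[segment][1] += 1
    return {seg: passed / total for seg, (passed, total) in totals.items()}


# Hypothetical results: 18 billing examples that all pass,
# plus 2 legal examples where only one passes.
results = [
    {"intent": "billing", "model": "m-1", "prompt": "p-3", "correct": True}
    for _ in range(18)
] + [
    {"intent": "legal", "model": "m-1", "prompt": "p-3", "correct": True},
    {"intent": "legal", "model": "m-1", "prompt": "p-3", "correct": False},
]

overall = sum(r["correct"] for r in results) / len(results)
by_segment = pass_rate_by_segment(results)
```

Here the overall pass rate is 95%, yet the legal segment passes only half the time. That gap is the kind of long-tail failure an average conceals.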

Human evaluation without bottlenecks

Human review is necessary, but random manual scoring does not scale. Use calibrated rubrics with clear anchors (for example, 1–5 factuality and 1–5 actionability). Review statistically meaningful samples plus high-risk slices. For sensitive workflows, route low-confidence outputs through a human-in-the-loop control instead of forcing full human review on all traffic.
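The routing idea can be sketched in a few lines. This example assumes each output carries a confidence score between 0 and 1 from some upstream evaluator and a flag marking high-risk slices; the `Output` class, the `route` function, and the 0.7 threshold are all hypothetical and would need tuning for a real workflow.

```python
from dataclasses import dataclass


@dataclass
class Output:
    text: str
    confidence: float  # assumed score in [0, 1] from an upstream evaluator
    high_risk: bool = False  # assumed flag for sensitive slices


def route(output: Output, threshold: float = 0.7) -> str:
    """Send high-risk or low-confidence outputs to a reviewer, release the rest."""
    if output.high_risk or output.confidence < threshold:
        return "human_review"
    return "auto_release"


# Hypothetical traffic sample.
queue = [
    Output("Your refund was issued.", confidence=0.93),
    Output("You may be eligible for compensation.", confidence=0.55),
    Output("Here is your account data export.", confidence=0.90, high_risk=True),
]
decisions = [route(o) for o in queue]
```

Only the second and third outputs reach a reviewer, so human attention goes where uncertainty or risk is highest rather than being spread across all traffic.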

Release gates that prevent silent regressions

Every model, prompt, or tool change should pass release gates before rollout:

- No critical safety regressions vs baseline.

- Task quality above threshold on golden and freshness sets.

- Latency and cost within allowed SLO budget.

- Incident regression set remains green.

If any gate fails, the release is blocked. This is where many teams improve reliability dramatically: not by hunting for one perfect prompt, but by refusing to ship unverified changes.
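The four gates above can be expressed as a single check that blocks a release when any gate fails. The following is a minimal sketch, assuming evaluation results arrive as plain dictionaries; every metric name and threshold shown is a placeholder, not a recommended value.

```python
def evaluate_gates(candidate: dict, baseline: dict, thresholds: dict):
    """Return (ship, gates), where ship is True only if every gate passes."""
    gates = {
        # No critical safety regressions vs baseline.
        "safety": candidate["critical_safety_failures"]
        <= baseline["critical_safety_failures"],
        # Task quality above threshold on golden and freshness sets.
        "quality": candidate["golden_score"] >= thresholds["min_quality"]
        and candidate["freshness_score"] >= thresholds["min_quality"],
        # Latency and cost within the allowed budget.
        "latency_cost": candidate["p95_latency_ms"] <= thresholds["max_p95_latency_ms"]
        and candidate["cost_per_accepted"] <= thresholds["max_cost_per_accepted"],
        # Incident regression set remains green.
        "regression": candidate["regression_failures"] == 0,
    }
    return all(gates.values()), gates


# Hypothetical numbers for one candidate release.
candidate = {
    "critical_safety_failures": 0,
    "golden_score": 0.91,
    "freshness_score": 0.84,
    "p95_latency_ms": 1800,
    "cost_per_accepted": 0.012,
    "regression_failures": 0,
}
baseline = {"critical_safety_failures": 0}
thresholds = {
    "min_quality": 0.85,
    "max_p95_latency_ms": 2000,
    "max_cost_per_accepted": 0.02,
}

ship, gates = evaluate_gates(candidate, baseline, thresholds)
```

In this example the candidate is blocked: the freshness score of 0.84 falls below the 0.85 threshold, so the quality gate fails even though every other gate passes. Returning the per-gate results alongside the decision makes it clear why a release was stopped.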

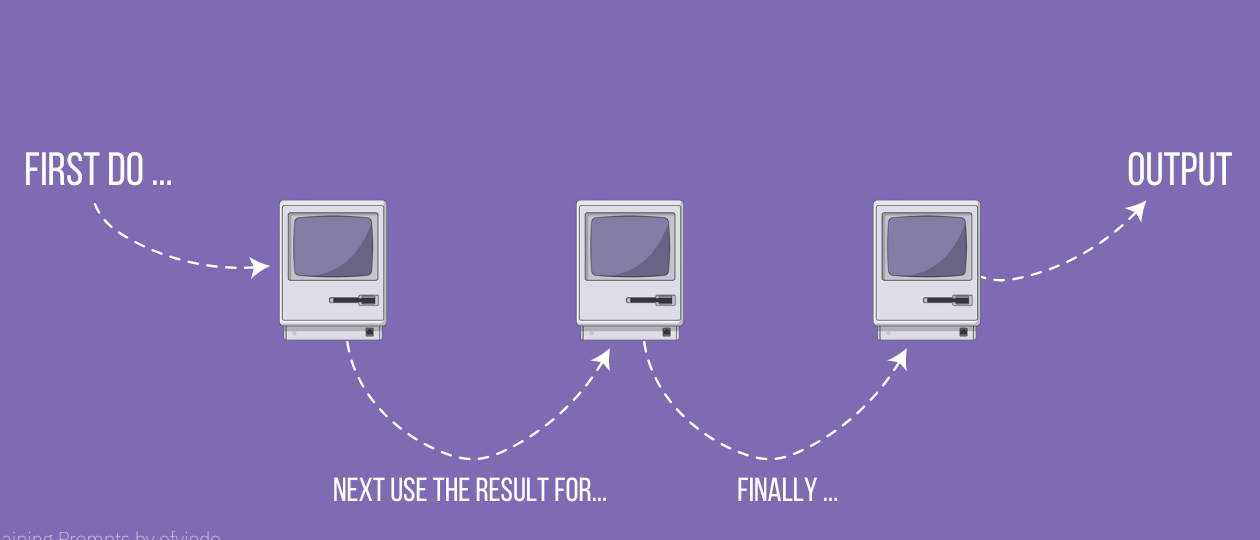

Operationalizing weekly evaluation

A simple weekly cadence works well. Monday runs the baseline battery. Wednesday runs targeted experiments. Friday closes with a decision review combining quality, runtime, and business impact. Store all runs with metadata: model version, prompt version, tool policy, dataset hash, and evaluator version. Over time, this evidence trail lets teams explain quality shifts to product and operations without speculation.
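A run record with the metadata listed above might look like the sketch below. It assumes runs are appended to a JSON Lines log; the `EvalRun` class, the version strings, and the scores are made up for illustration.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class EvalRun:
    model_version: str
    prompt_version: str
    tool_policy: str
    dataset_hash: str
    evaluator_version: str
    scores: dict = field(default_factory=dict)


def to_jsonl_line(run: EvalRun) -> str:
    """Serialize one run as a single JSON line with a stable key order."""
    return json.dumps(asdict(run), sort_keys=True)


# Hypothetical Monday baseline run.
run = EvalRun(
    model_version="model-2024-06",
    prompt_version="p-14",
    tool_policy="read-only",
    dataset_hash="3f9a0c",  # placeholder, normally a full digest
    evaluator_version="rubric-v2",
    scores={"correctness": 0.88, "groundedness": 0.91},
)
line = to_jsonl_line(run)
restored = EvalRun(**json.loads(line))
```

Because each line is self-describing, two runs can be compared field by field to see whether the model, prompt, tool policy, dataset, or evaluator changed between them.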

When evaluation is operationalized, teams can move faster with less risk. You stop debating impressions and start making decisions from reproducible evidence. This discipline is particularly important when organizations run multiple agent workflows across support, research, and internal automation.

Common mistakes to avoid

- Overfitting to one benchmark while ignoring real user-intent diversity.

- Using averages only and hiding long-tail failures.

- Treating safety checks as optional under deadline pressure.

- Ignoring runtime cost while celebrating marginal quality gains.

The strongest teams treat evaluation as part of delivery, not research overhead. A framework is valuable when it accelerates confident decisions and reduces rework.

Final takeaway

Evaluation frameworks for generative AI are the bridge between prototype excitement and production reliability. Define layered metrics, version datasets, enforce release gates, and connect quality to business outcomes. Once evaluation becomes an operational habit, you ship better systems with less downtime and fewer regressions.